🇺🇸 White House Orders Federal Ban on Anthropic

What happened

The U.S. government just pulled the plug on Anthropic. On February 27, President Trump directed every federal agency to immediately cease using the company’s AI technology, declaring the government “will not do business with them again.” The Pentagon was given six months to phase out existing deployments.

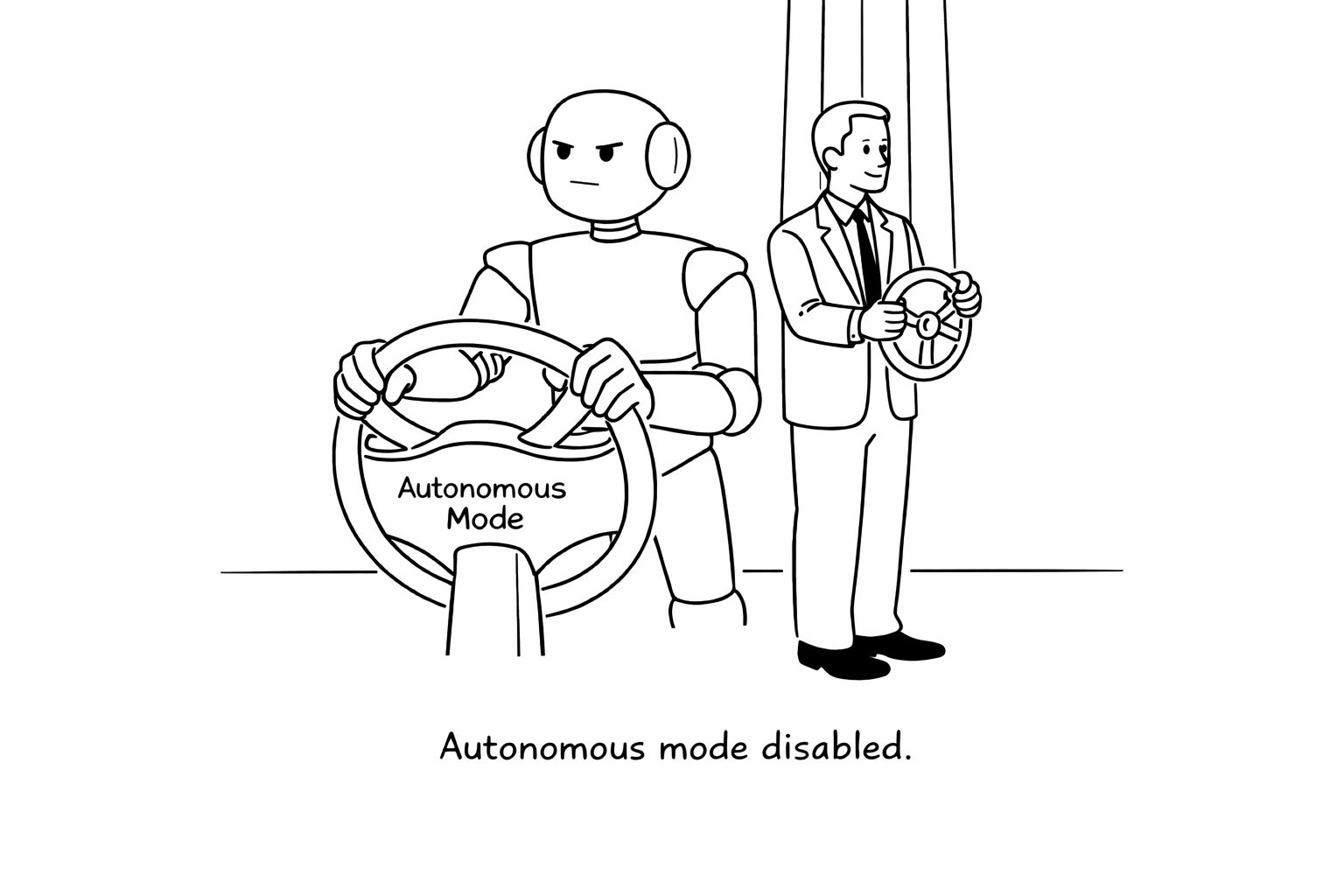

The move follows a months-long dispute over how Anthropic’s models could be used in military settings. The company sought contract language prohibiting use of its AI in fully autonomous weapons or mass domestic surveillance. Defense Secretary Pete Hegseth responded by designating Anthropic a national-security supply-chain risk — a label typically reserved for adversarial foreign firms — and ordered defense contractors to cut commercial ties.

Why it matters

This is the first time a frontier AI lab has been effectively blacklisted by the U.S. government. The designation threatens Anthropic’s Pentagon contracts (worth up to $200 million) and could ripple into broader public-sector and defense-industry partnerships.

More importantly, it sets a precedent: can AI companies impose guardrails on how governments use their models — or does national security override model-level restrictions?

What’s next

Anthropic says the designation is legally unsound and plans to challenge it in court. Competitors supplying AI to defense agencies will now face pressure to clarify their own red lines.

This isn’t just a procurement dispute. It’s a power struggle over who ultimately controls frontier AI systems once they leave the lab.

🤖 Enterprise AI Agents Are Quietly Becoming Digital Employees

What happened

Anthropic recently launched customizable enterprise plugins that allow its Claude model to act directly inside business software — from spreadsheets to email — executing tasks instead of just suggesting them. Around the same time, Perplexity unveiled “Perplexity Computer,” a system that orchestrates 19 different AI models to autonomously run complex, multi-step workflows over hours or even months.

Instead of answering prompts, these systems break goals into subtasks, spin up specialized sub-agents, and operate across files, APIs, and browsers with limited human supervision.

Why it matters

This marks a shift from AI assistants to AI operators. The interface is no longer a chat window — it’s your workflow.

As orchestration layers mature, companies may deploy persistent AI “employees” that manage reporting, research, analysis, and documentation across tools. The economic implications are larger than incremental productivity gains.

What’s next

Expect competition to intensify around model orchestration, auditability, and enterprise guardrails. The companies that win won’t just have better models — they’ll control the systems that coordinate them.

🏭 AI Moves Deeper Into Heavy Industry

What happened

Freeport-McMoRan is deploying AI-driven autonomous haulage systems across its mining operations in Arizona and Indonesia. The systems optimize routing, fuel use, and maintenance scheduling without human intervention, delivering measurable improvements in efficiency and safety.

The company has also integrated AI-powered ore sorting, predictive maintenance via digital twins, and real-time environmental monitoring to reduce energy use and downtime.

Why it matters

Physical AI is leaving the lab and entering capital-intensive, real-world operations. This isn’t a demo — it’s production infrastructure.

When AI improves throughput, safety, and emissions simultaneously, adoption accelerates. Mining, logistics, manufacturing, and energy are next in line.

What’s next

Expect more autonomous fleets, AI-optimized processing plants, and tighter integration between sensor networks and predictive models. The frontier of AI isn’t just digital — it’s industrial.

🤖 Google Integrates Intrinsic to Scale Robotics AI

What happened

Google announced it’s bringing Intrinsic, its robotics software arm, into the core Google organization. Intrinsic’s Flowstate platform lets developers build robotic applications without heavy custom code. Intrinsic will work closely with Google DeepMind and draw on Gemini AI models and Google Cloud while remaining a distinct group within Google.

Why it matters

This marks a strategic shift: Google is making physical AI and robotics a foundational part of its business, not just an experimental side project. By combining Intrinsic’s robotics tools with Google’s AI and infrastructure, the company aims to accelerate real-world deployment of robotics in manufacturing and logistics, directly challenging Amazon and Tesla.

What’s next

Expect faster, broader adoption of robotics in industry, with Google aiming to make advanced physical AI accessible to more businesses and developers.

🦾 BMW Deploys Humanoid Robots in Leipzig Plant

What happened

BMW announced it’s deploying Hexagon’s AEON humanoid robots at its Leipzig plant for assembling components and high-voltage batteries. This follows a successful pilot with Figure AI’s Figure 02 robot, which worked ten-hour shifts for ten months, moving over 90,000 parts and helping produce 30,000+ X3 crossovers.

Why it matters

BMW’s move shows humanoid robots are now delivering real value in demanding, repetitive manufacturing tasks. The company aims to relieve workers of monotonous or ergonomically tough jobs, improve safety, and boost competitiveness. This is part of a broader trend, with Mercedes-Benz and Hyundai also ramping up humanoid robot deployments.

What’s next

BMW will continue piloting AEON robots and may expand their use across more production lines, potentially setting a new standard for automation in the auto industry.

🛡️ Agentic AI Sprawl Raises Security Concerns

What happened

A widely discussed analysis warned that the rapid spread of agentic AI (“agentic AI sprawl”) could outpace enterprise security teams’ ability to manage and govern all autonomous agents in their environments.

Why it matters

As organizations deploy more agentic AI for workflow automation, the risk of unmanaged or rogue agents becomes a critical security and compliance issue. The analysis calls for new frameworks and tools to ensure safe operation at scale.

What’s next

Expect a surge in demand for agent management and observability tools, as well as new governance protocols for agentic AI.

🖥️ Perplexity Computer: Safer Agentic AI Platform Announced

What happened

Perplexity announced Perplexity Computer, a new agentic AI platform inspired by OpenClaw but designed for enhanced safety. Unlike previous agentic tools that run locally, Perplexity Computer operates entirely in a controlled cloud environment.

Why it matters

This addresses a key concern: the risk of unpredictable agent behavior or data access when agents run locally. By shifting execution to the cloud, Perplexity aims to provide enterprise-grade safety and compliance for agentic workflows.

What’s next

Perplexity plans to expand the platform with more orchestration features and compliance certifications, targeting enterprise customers seeking safe, scalable agentic AI.

💡 The Bottom Line

AI has entered its control era — where power, governance, and deployment matter more than raw model performance. The winners won’t just build smarter systems; they’ll control how they’re used at scale.