Agentic AI

🤖 OpenAI hardens the agent stack for real work

What happened

OpenAI updated its Agents SDK with a model-native harness and native sandbox execution, letting agents inspect files, run commands, edit code, and handle longer multi-step tasks inside controlled workspaces; the new capabilities are generally available through the API.

Why it matters

This is a shift from “agent demos” to production plumbing: OpenAI is standardizing the execution layer enterprises actually need, especially around safety, durability, and long-horizon work.

What’s next

OpenAI says TypeScript support is coming later, along with additional capabilities like code mode and subagents, which points to a broader push to make agent infrastructure more turnkey across stacks.

🛡️ AI attacks speed up. IBM responds with AI defense

What happened

IBM launched a new cybersecurity assessment for frontier-model threats and introduced IBM Autonomous Security, a multi-agent service designed to automate vulnerability remediation and coordinated response at machine speed.

Why it matters

IBM is effectively saying the security market has crossed a threshold: if attackers are using frontier AI to accelerate the full attack lifecycle, fragmented human-led security operations are no longer enough.

What’s next

Expect more enterprise security vendors to reframe their products around coordinated autonomous response, because IBM’s core argument is that defensive advantage now comes from how quickly and coherently systems act together.

Generative & Enterprise AI

🗣️ Google upgrades AI speech from readable to directable

What happened

Google introduced Gemini 3.1 Flash TTS, a new text-to-speech model with improved quality, finer expressive control through natural-language audio tags, support for more than 70 languages, and SynthID watermarking; it is rolling out in preview via the Gemini API, Google AI Studio, Vertex AI, and Google Vids.

Why it matters

This pushes enterprise voice AI up the stack: the differentiator is no longer just sounding natural, but being controllable, multilingual, and safe enough for productized use across apps and media workflows.

What’s next

Because Google is shipping the model through both developer and enterprise channels on day one, expect faster adoption in customer support, media production, training, and other speech-heavy workflows.

🎨 Adobe turns Creative Cloud into a conversational interface

What happened

Adobe unveiled Firefly AI Assistant, powered by its new creative agent, which lets creators describe an outcome in plain language while the system orchestrates multi-step workflows across Firefly, Photoshop, Premiere, Lightroom, Express, Illustrator, and more.

Why it matters

Adobe is moving creative AI beyond generation into orchestration: the product is designed to collapse app-switching and tool complexity without taking creative control away from the user.

What’s next

Adobe says Firefly AI Assistant will enter public beta in the coming weeks, and it also plans to extend this agentic workflow model into third-party surfaces including Anthropic’s Claude.

🤝 GitLab and Google Cloud deepen Vertex AI integration for DevSecOps

What happened

GitLab and Google Cloud expanded their partnership, enabling direct access to Vertex AI models, including Gemini, within the GitLab Duo Agent Platform. Enterprise teams can now run AI-powered development and security workflows on their existing cloud infrastructure with full governance and auditability.

Why it matters

This integration lets software teams use Gemini and other Vertex AI models inside the GitLab workflows and cloud infrastructure they already run, so adopting advanced models does not mean disrupting established processes.

What’s next

Expect faster, safer software delivery as AI becomes a seamless part of enterprise DevSecOps pipelines.

🦾 Mallory launches AI-native threat intelligence platform

What happened

Mallory debuted an AI-native threat intelligence platform for security teams, using LLMs and agentic workflows to deliver real-time, prioritized, evidence-based threat cases rather than generic alerts. The platform integrates with existing security tools and operationalizes generative AI for enterprise cybersecurity.

Why it matters

This marks a shift from alert fatigue to actionable intelligence, helping security teams respond faster and more effectively to real-world threats.

What’s next

Watch for more security vendors to embed generative and agentic AI into their platforms, raising the bar for cyber defense.

Physical AI

🏭 Accenture bets on the robot orchestration layer

What happened

Accenture invested in General Robotics and said the two companies will work together to help manufacturers, logistics operators, and other asset-intensive industries advance autonomous operations with physical AI; General Robotics’ GRID platform connects robots across OEMs through modular AI skills, cloud orchestration, and simulation-based training.

Why it matters

The biggest bottleneck in industrial robotics is increasingly interoperability, not hardware alone; if one intelligence layer can coordinate mixed fleets, physical AI gets much easier to deploy at enterprise scale.

What’s next

Accenture is positioning this as part of a broader “hybrid agentic, physical, and human workforce,” which means the next phase of industrial AI will be judged on orchestration, safety, and repeatable rollout across facilities.

🐕 Spot gets smarter eyes and better judgment

What happened

Robotics & Automation News reports Boston Dynamics integrated Google’s Gemini and Gemini Robotics-ER 1.6 into Spot’s Orbit inspection stack, enabling the robot to move beyond basic detection toward higher-order reasoning, including reading gauges, thermometers, and sight glasses in industrial settings.

Why it matters

That’s a meaningful upgrade for physical AI: industrial inspection becomes less about hard-coded checks and more about contextual understanding, which is exactly what makes robots more useful in messy real-world environments.

What’s next

Boston Dynamics says the Gemini-powered system is already live for existing customers on the platform, so the near-term test is whether reasoning-based inspection can prove reliable enough to expand beyond narrow pilot workflows.

🤖 Agibot G2 humanoid robots hit Chinese factory floors

What happened

Agibot’s G2 humanoid robots have been deployed at Longcheer Technology’s Shanghai facility, in what is billed as the world’s first live industrial-scale use of humanoids in electronics manufacturing. The G2s handle high-precision loading and unloading with success rates above 99% and 24/7 autonomous operation.

Why it matters

This is a milestone for embodied AI, moving robots from pilot projects to economically valuable, real-world production roles.

What’s next

Agibot plans to scale to 100 robots by Q3 2026 and expand into automotive and semiconductor sectors, signaling rapid adoption of humanoid automation.

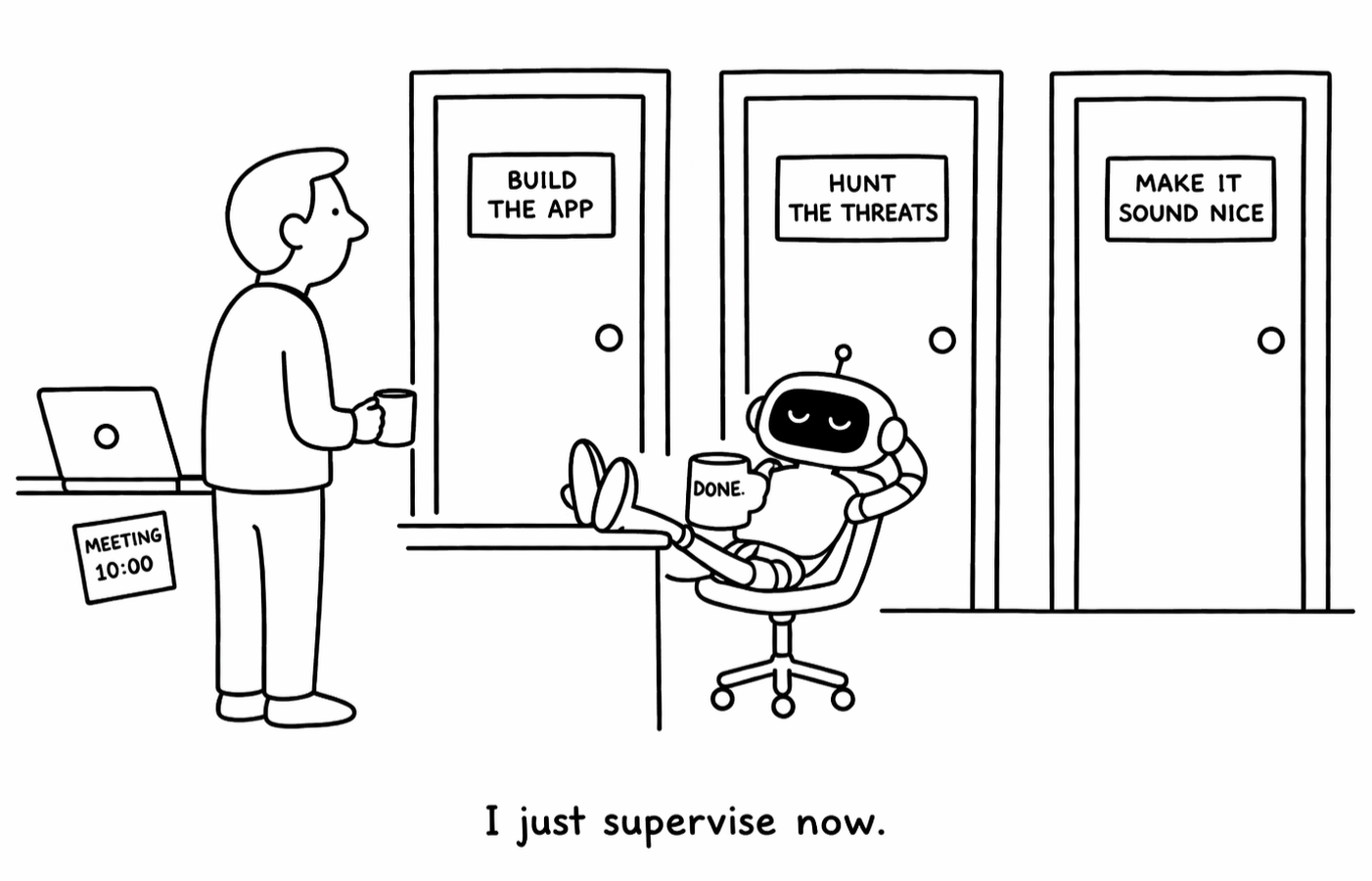

💡 Bottom Line

Agents are moving from demos to real systems, taking on core workflows across development, security, and operations. As they do, control is consolidating with the platforms that embed, orchestrate, and govern them.

⚙️ Try It Yourself

Pick one workflow you touch: coding, security, or content.

Use an agent-style tool (You.com, ChatGPT, Gemini, or Claude) to run a multi-step task (write → review → refine).

Add a second pass focused on defense (ask it to find vulnerabilities, errors, or risks).

Compare how the system performs when it’s doing the work versus just assisting.
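
If you would rather script the exercise than run it by hand, the steps above can be sketched roughly as follows. This is a minimal illustration, not any vendor's API: `run_workflow`, `echo_model`, and the prompt wording are all hypothetical, and `echo_model` is a toy stand-in so the sketch runs offline. Swap in a call to whichever tool you actually use.

```python
from typing import Callable

# Hypothetical stand-in type for whatever agent or chat tool you use:
# a function that takes a prompt and returns the tool's text response.
Model = Callable[[str], str]


def run_workflow(task: str, model: Model, defensive_pass: bool = True) -> dict[str, str]:
    """Run write -> review -> refine, then an optional defense-focused pass."""
    steps: dict[str, str] = {}
    steps["draft"] = model(f"Write: {task}")
    steps["review"] = model(
        f"Review this draft and list concrete problems:\n{steps['draft']}"
    )
    steps["refined"] = model(
        "Refine the draft to address the review.\n"
        f"Draft:\n{steps['draft']}\nReview:\n{steps['review']}"
    )
    if defensive_pass:
        # Second pass focused on defense, as described in the steps above.
        steps["risks"] = model(
            f"Find vulnerabilities, errors, or risks in:\n{steps['refined']}"
        )
    return steps


def echo_model(prompt: str) -> str:
    """Toy model (assumption): echoes the first prompt line instead of calling a real tool."""
    first_line = prompt.splitlines()[0]
    return f"[response to: {first_line}]"


if __name__ == "__main__":
    result = run_workflow("a function that validates email addresses", echo_model)
    for name, text in result.items():
        print(f"{name}: {text}")
```

Keeping each step's output in a dictionary makes the final comparison easier: you can read the draft, the review, the refined version, and the risk list side by side.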

You’ll see the shift: agents aren’t just helping anymore; they’re starting to operate.