Agentic AI

💳 Meow tries to become the bank layer for autonomous agents

What happened

Meow Technologies launched what it calls an “agentic banking platform,” letting AI agents open business bank accounts, issue cards, send payments, and manage account activity; it also says it supports major agent tools (Claude, ChatGPT, Cursor, Gemini).

Why it matters

Agents have been gaining permissions across SaaS tools, but moving money has stayed the “human-only” exception. This is a direct attempt to turn banking into a machine-invocable API surface.

What’s next

Meow says it’s built on a permissioned architecture (initiator/approver workflows, limits, role-based permissions, audit logs) and exposes an MCP endpoint—expect “agent finance” to standardize around MCP-style connectors plus enterprise approval policies.
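
To make the permissioned pattern concrete, here is a minimal Python sketch of an initiator/approver workflow with a spending limit, role checks, and an audit log. It is an illustration under stated assumptions, not Meow's actual API: `PaymentDesk`, `Role`, `Payment`, the actor names, and the limit value are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Role(Enum):
    INITIATOR = "initiator"  # may propose payments (for example, an AI agent)
    APPROVER = "approver"    # may release payments (for example, a human)


@dataclass
class Payment:
    payment_id: int
    payee: str
    amount_cents: int
    initiated_by: str
    status: str = "pending"


@dataclass
class PaymentDesk:
    """Hypothetical policy layer for illustration; not Meow's actual API."""
    roles: dict[str, Role]
    per_payment_limit_cents: int
    payments: dict[int, Payment] = field(default_factory=dict)
    audit_log: list[str] = field(default_factory=list)

    def _require_role(self, actor: str, role: Role, action: str) -> None:
        # Role-based permission check; denials are logged too.
        if self.roles.get(actor) is not role:
            self.audit_log.append(f"DENIED {action} by {actor}")
            raise PermissionError(f"{actor} may not {action}")

    def initiate(self, actor: str, payee: str, amount_cents: int) -> Payment:
        self._require_role(actor, Role.INITIATOR, "initiate")
        if amount_cents > self.per_payment_limit_cents:
            self.audit_log.append(f"DENIED initiate by {actor}: over limit")
            raise ValueError("amount exceeds per-payment limit")
        payment = Payment(len(self.payments) + 1, payee, amount_cents, actor)
        self.payments[payment.payment_id] = payment
        self.audit_log.append(f"INITIATED #{payment.payment_id} by {actor}")
        return payment

    def approve(self, actor: str, payment_id: int) -> Payment:
        # Only an approver can move a pending payment forward.
        self._require_role(actor, Role.APPROVER, "approve")
        payment = self.payments[payment_id]
        payment.status = "approved"
        self.audit_log.append(f"APPROVED #{payment_id} by {actor}")
        return payment


# The agent proposes a payment; a human with the approver role releases it.
desk = PaymentDesk(
    roles={"agent-1": Role.INITIATOR, "cfo": Role.APPROVER},
    per_payment_limit_cents=50_000,
)
payment = desk.initiate("agent-1", "Acme Hosting", 12_500)
desk.approve("cfo", payment.payment_id)
print(payment.status)
print(desk.audit_log)
```

The design choice worth noticing is that the agent can never approve its own request, and every attempt, allowed or denied, leaves a log entry. A real platform would layer authentication, persistence, and finer-grained limits on top of this.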

🧰 Anthropic ships managed agent infrastructure as a product

What happened

Anthropic launched Claude Managed Agents, positioned as a cloud service that handles sandboxing, orchestration, and governance for production agents—adding managed hosting, scaling, monitoring, and error recovery (with “millisecond-level billing” called out).

Why it matters

Enterprise teams don’t just need a model—they need the operational scaffolding (secure execution, permissions, telemetry, recovery). Packaging that stack shifts competition toward “agent platforms,” not just “agent prompts.”

What’s next

Anthropic is framing this as a strategic move into the ops layer (and into a crowded field with major cloud platforms), so expect faster iteration on agent governance features and tighter integration with Anthropic’s broader ecosystem choices.

Generative & Enterprise AI

🏗️ CoreWeave signs a multi-year Anthropic deal as the GPU landlord play accelerates

What happened

CoreWeave announced a multi-year agreement with Anthropic to provide Nvidia GPU capacity in US data centers for “production-scale” workloads, with financial terms undisclosed; CoreWeave also claims its platform now includes nine of the ten leading AI model providers.

Why it matters

This is the market’s current structure in one story: demand is outpacing hyperscaler supply, so specialized GPU clouds are locking in long-duration contracts—and the contracts are becoming the strategic asset (not spot capacity).

What’s next

TNW reports CoreWeave’s 2025 revenue ($5.13B), 2026 guidance (>$12B), and backlog (>$66B) as signals of how fast “AI infrastructure companies” are becoming mega-scale businesses—expect more multi-year compute procurement deals to be treated like critical supply chain agreements.

🧩 Khoros rebuilds enterprise community software around auditable AI agents

What happened

Khoros launched Aurora AI, positioning it as a new, “AI-native” community platform; it says Aurora AI launches with three AI agents in beta (Answer Assist, AI Moderation, Orchestrator) and emphasizes “grounded and auditable” behavior (citations + audit trails).

Why it matters

Enterprise adoption is shifting from “add an assistant” to “re-platform the workflow”: the winners are embedding AI into routing, moderation, and automation with compliance-friendly artifacts (sources, logs, determinism).

What’s next

Khoros also announced free migrations through Dec 31, 2026—an aggressive move to pull customers onto the new architecture quickly, which is where the AI roadmap lives.

🦙 Meta Unleashes Llama 4: Open-Weight, Natively Multimodal LLMs Go Live

What happened

Meta released Llama 4 Scout and Llama 4 Maverick, its first open-weight, natively multimodal models, both built on a mixture-of-experts (MoE) architecture. Scout offers a 10 million token context window. Meta also previewed Llama 4 Behemoth, a larger model with 288 billion active parameters that was still in training at announcement; Meta says it outperforms GPT-4.5 and Gemini 2.0 Pro on several STEM benchmarks. The models combine text and vision natively, and Meta says they were pre-trained on data spanning 200 languages.

Why it matters

Open weights plus native multimodality give researchers and enterprises a model family they can inspect, fine-tune, and self-host. That lowers the barrier to building on frontier-class capabilities and should accelerate open-source work on top of them.

What’s next

Expect a surge in open-source projects, rapid enterprise experimentation, and new benchmarks for multimodal AI performance as the community races to build on Llama 4’s foundation.

Physical AI

🦿 Faraday Future pushes “robot-as-a-chat-contact” plus no-code skill building

What happened

Faraday Future released a demo showing its FX Aegis quadruped robot autonomously completing a food delivery task, and says it integrated the open-source OpenClaw framework so the robot can function as a “contact” in messaging apps for tasking and real-time updates.

Why it matters

This is a practical interface shift for embodied AI: if robots become message-addressable endpoints (like a teammate in chat), and skills can be built with no/low-code “conversational instructions,” deployment friction drops sharply.

What’s next

Faraday Future explicitly points to “world memory” to evolve from responding to instructions toward proactively identifying tasks—expect more robotics vendors to market long-term memory + agent layers as the differentiator, not just locomotion.

🤖 Humanoid Robots Take Center Stage at SusHi Tech 2026

What happened

SusHi Tech 2026 in Tokyo is putting robotics in the spotlight, with live demos of humanoid robots and sessions on autonomous driving, cyber defense, and climate tech. The event is positioning embodied AI as a core driver of the next wave of societal transformation, with a focus on real-world deployments and capability showcases.

Why it matters

This marks a high-visibility moment for physical AI, signaling that robotics and embodied intelligence are moving from experimental projects to foundational technologies with real-world impact. Public demos at a major tech event highlight accelerating progress and growing mainstream interest.

What’s next

Look for increased investment, new partnerships, and more frequent public demonstrations as humanoid and industrial robots move into pilot deployments and everyday settings.

🦾 Snap Partners with Qualcomm for Next-Gen AI Smart Glasses

What happened

Snap’s smart glasses unit, Specs, announced a multi-year deal with Qualcomm to power its upcoming AI-enabled glasses—Snap’s first major move in the physical AI space since forming the unit earlier this year.

Why it matters

The partnership pairs Snap’s augmented reality ambitions with Qualcomm’s AI hardware, signaling a push toward more capable AI in wearable devices. Consumer-grade embodied AI devices are inching closer to mainstream adoption, with Snap aiming to lead the charge.

What’s next

Snap is expected to accelerate development and tease new AI glasses, with industry watchers anticipating further announcements on features and deployment timelines.

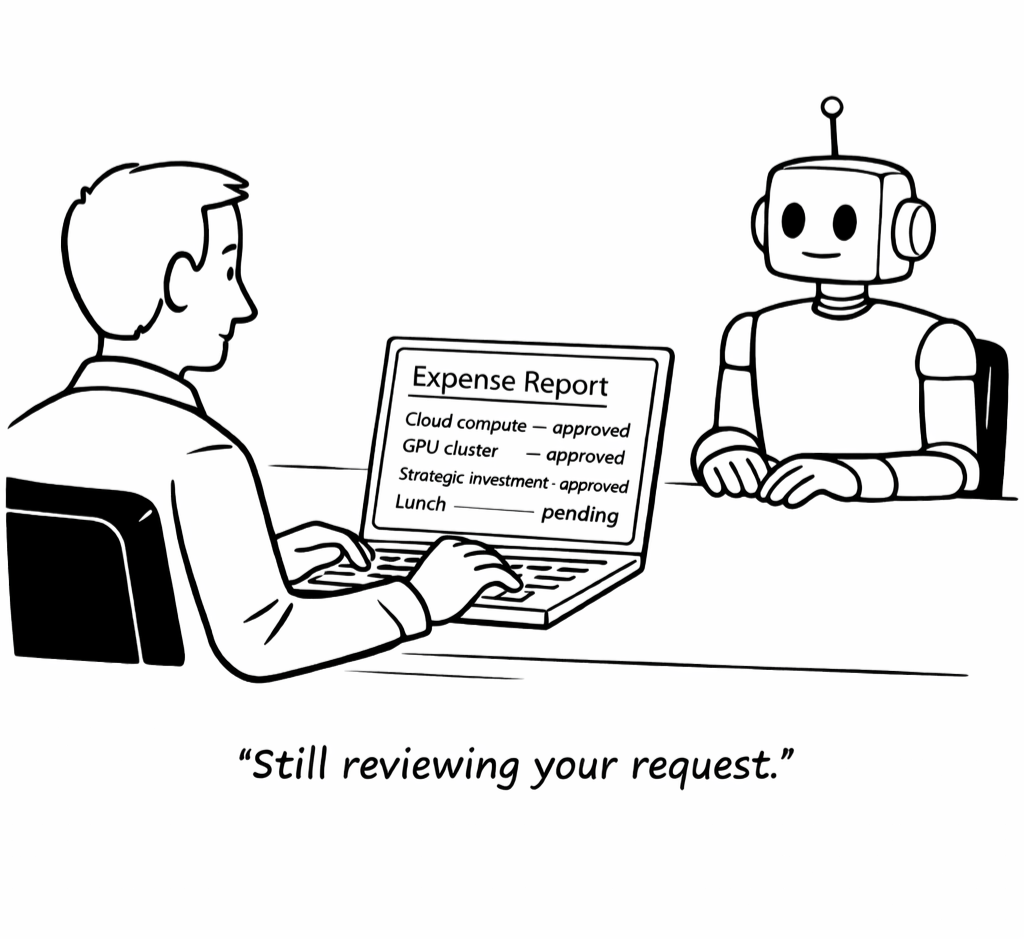

💡 Bottom Line

Agents are crossing a critical threshold—from executing workflows to operating inside real systems with money, infrastructure, and physical impact. As they gain access, the real battleground shifts to control: permissions, governance, and who owns the stack.

⚙️ Try It Yourself

Want to feel where this is going?

Run a simple “agent with authority” experiment:

In You.com, ChatGPT, or Claude, ask the assistant to plan a purchase (e.g., “Find and compare the best red light therapy devices under $1,000”).

Then extend it:

“Now outline the exact steps to buy, including payment, tracking, and follow-up”

Finally:

“What permissions, approvals, or safeguards would you need to actually execute this?”

You’ll quickly see the gap: agents can already decide, but the system to safely let them act is still being built.

That gap is where the next wave of platforms (and power) will emerge.