Agentic AI

🔐 Microsoft says agent security has to be end-to-end

What happened

Microsoft published “Secure agentic AI end-to-end,” positioning agent-era security as three parallel jobs: secure the agents, secure the foundations (identity/data/workflows), and defend using agents plus humans—alongside specific product capabilities meant to expose and reduce AI risk across an enterprise.

Why it matters

Once agents can call tools and touch data, “prompt hygiene” is not the primary control—visibility, identity gating, and data-loss boundaries are, and Microsoft is explicitly framing this as a first-class security architecture problem.

What’s next

Microsoft highlights a Security Dashboard for AI as generally available, with additional visibility controls called out on specific dates (e.g., Entra Internet Access Shadow AI Detection on March 31 and richer app inventory in May), signaling a near-term push to make “AI-risk telemetry” the default.

🧱 Databricks adds 35 agentic risks to its AI Security Framework

What happened

Databricks extended the Databricks AI Security Framework (DASF) to cover Agentic AI as its own system component, adding 35 new agentic AI security risks and 6 mitigation controls, plus specific guidance around memory/planning/tool use and MCP-style tool-server/client threats.

Why it matters

This is the kind of “agent safety” artifact security teams can operationalize: named risks, named controls (least privilege, sandboxing, human oversight), and explicit attention to tool-ecosystem attack surface—exactly where autonomous workflows tend to blow up.

What’s next

If DASF v3.0 becomes a shared checklist, expect buyers to ask agent vendors for control mappings, and expect MCP tool-server hardening to become table stakes for production deployments, especially in regulated environments.

💬 Anthropic makes Claude Code “always-on” via Telegram + Discord

What happened

VentureBeat reports Anthropic shipped “Claude Code Channels,” enabling a running Claude Code session to receive tasks from messaging apps via official plugins and a --channels session flag—then reply when work is complete.

Why it matters

Messaging turns a coding agent into a background worker you can “page” from anywhere, which is great for throughput—and a governance headache if you don’t treat it like remote execution with permissions, audit logs, and clearly bounded tool access.
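To make "treat it like remote execution" concrete, here is a minimal sketch of a gate that checks each inbound chat request against an allow-list and records every decision. It is an illustration only and does not reflect how Claude Code Channels works: `ChannelPolicy`, the sender names, and the tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ChannelPolicy:
    """Hypothetical policy gate for tasks arriving over a chat channel."""
    allowed_senders: set[str]
    allowed_tools: set[str]
    audit_log: list[dict] = field(default_factory=list)

    def authorize(self, sender: str, tool: str) -> bool:
        # A request passes only if both the sender and the tool are allow-listed.
        allowed = sender in self.allowed_senders and tool in self.allowed_tools
        # Record every decision, including denials, so the trail is complete.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "sender": sender,
            "tool": tool,
            "allowed": allowed,
        })
        return allowed


# Assumed example values: one trusted sender, two low-risk tools.
policy = ChannelPolicy(
    allowed_senders={"alice"},
    allowed_tools={"run_tests", "read_file"},
)

ok = policy.authorize("alice", "run_tests")          # known sender, bounded tool
denied = policy.authorize("alice", "delete_branch")  # tool outside the boundary
unknown = policy.authorize("mallory", "run_tests")   # sender not allow-listed
```

The design choice worth noting is that denials are logged alongside approvals; an audit trail that only records successful calls hides exactly the probing you would want to see.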

What’s next

Because Channels leans on open connector patterns (and a persistent session model), the next competitive wedge is likely more connectors plus tighter enterprise controls (policy, logging, and safer defaults) to make “always-on” feel less like “always-dangerous.”

Enterprise & Generative AI

🧠 Top 9 Large Language Models as of March 2026

What happened

Shakudo published a comprehensive roundup of the leading LLMs as of March 2026, highlighting the release of OpenAI’s GPT-5.2, Meta’s Llama 4, Anthropic’s Claude 4 family, Mistral 3, and Google’s Gemini 3 Pro/Flash, among others.

Why it matters

The list shows how crowded the top tier has become: each model is competing on context length, multimodal capabilities, and enterprise features.

What’s next

Expect continued rapid iteration, with new benchmarks and specialized models targeting both general and domain-specific applications.

🧳 Mozilla’s Llamafile gets a rebuilt core + GPU acceleration

What happened

Help Net Security reports Mozilla-AI’s Llamafile v0.10.0 is a from-scratch rebuild that restores GPU acceleration and adds a terminal UI, --server mode, and distinct run modes for chat, CLI, and server usage.

Why it matters

The “portable local LLM” trend is shifting from hobby to infrastructure: a single-file runner with GPU support and clean interfaces makes private inference (including air-gapped workflows) more deployable for teams that can’t or won’t ship prompts to the cloud.

What’s next

Llamafile is explicitly pushing beyond text (terminal-accessible multimodal hooks and image input via --image) while also noting missing production features (including sandboxing/security-oriented pieces), so watch for hardening work that makes local GenAI safer by default.

🏁 Infosys + Formula E ship an AI-powered Race Centre with GenAI commentary

What happened

Infosys and Formula E announced an AI-powered Race Centre that blends race feeds with AI-generated commentary and interactive features (predictions/voting, PIT BOOST tracking, and driver event timelines), powered by Infosys Topaz.

Why it matters

Real-time GenAI narration is “live generation under constraints,” and pairing it with data-orchestration agents processing over 1.5 million data points per race is a concrete template for turning GenAI into product infrastructure rather than a standalone creative toy.

What’s next

If engagement metrics look good, expect the pattern to spread across sports: telemetry + generative storytelling + gamified interactivity—while teams invest heavily in correctness, latency, and responsible-AI guardrails to prevent “confidently wrong” commentary at race speed.

🎬 HiDream.ai launches a vertical “Claw” for image + video workflows

What happened

Through Newsfile, HiDream.ai announced HiDreamClaw, positioned as an image-and-video-native agent for creators on its vivago web platform, powered by the company’s HiDream-I1 multimodal model (stated as 10B+ parameters).

Why it matters

The pitch is a clear market shift: creators don’t just want “generate an asset,” they want an agent that fits the full workflow (ideation → copy → polish → output) with packaging around deployment, cost clarity, and security.

What’s next

HiDreamClaw emphasizes cloud sandboxing, physical isolation, and pay-as-you-go credits—so the adoption question becomes whether those constraints read as “safer + predictable” or “locked-in + opaque” compared to DIY model stacks.

Physical AI

🦿 Unitree Robotics files for an IPO on the Shanghai Stock Exchange

What happened

Reuters reports Unitree filed an IPO application seeking to raise 4.2 billion yuan, explicitly framing the move as a test of investor appetite for humanoid robots as a “frontier” category.

Why it matters

IPO filings are a forcing function: they turn hype into disclosures, disclosures into valuation, and valuation into a public benchmark for the entire humanoid-robot cohort.

What’s next

Near term: regulatory review, pricing, and demand discovery; longer term: whether investors see credible paths from limited deployments to repeatable industrial use cases that justify scaling humanoids beyond niche demos.

🧩 Infineon Technologies + NVIDIA bet on digital twins for safer humanoids

What happened

EEJournal reports Infineon is expanding collaboration with NVIDIA on humanoid robot architectures, leaning on digital twins of actuators/sensors inside NVIDIA’s Isaac Sim/Lab and on reference designs pairing Infineon components with Jetson Thor and the Halos AI Systems Inspection Lab.

Why it matters

This is the physical-AI version of “shift-left”: validate perception/control in simulation before hardware integration, and bake security/safety primitives (TPMs, secure boot, encrypted comms, safer OTA patterns) into reference architectures instead of retrofitting them later.

What’s next

Expect more “certifiable robotics stacks” to arrive as semi-standardized reference designs, because scaling humanoids from pilot → fleet requires not just better models, but repeatable safety cases and hardened hardware/software supply chains.

🤝 Fanuc partners with NVIDIA to accelerate physical AI in industrial robotics

What happened

Fanuc, a global leader in industrial robotics, announced a strategic collaboration with NVIDIA to combine AI computing, simulation, and digital twins for smarter, more adaptable automation. The partnership uses NVIDIA’s Jetson edge modules and Isaac Sim platform to enhance Fanuc’s robotics solutions.

Why it matters

This collaboration aims to deliver intelligent automation that can adapt to dynamic factory environments, moving beyond rigid programming to robots that see, reason, and act. It represents a significant step toward scalable, AI-driven manufacturing.

What’s next

Fanuc will integrate NVIDIA’s AI infrastructure into its global product line, with pilot deployments expected in advanced manufacturing facilities later this year.

📦 Dematic launches Command Center to unify warehouse ops signals

What happened

Via PR Newswire, Dematic announced Command Center, a vendor-agnostic platform that centralizes real-time monitoring, AI-enhanced decision support, and operational analytics to help warehouses detect emerging issues and diagnose root causes faster.

Why it matters

Warehouse automation is increasingly multi-vendor and multi-system; if your telemetry stays fragmented, “AI optimization” becomes a PowerPoint promise—centralizing signals is the enabling step that lets any intelligence layer actually act.

What’s next

Dematic says it will unveil Command Center at LogiMAT 2026 and showcase it at MODEX 2026. The vendor-agnostic positioning is also a directional signal: more warehouse intelligence will sit above (not inside) specific machines, orchestrating workflows without forcing rip-and-replace upgrades.

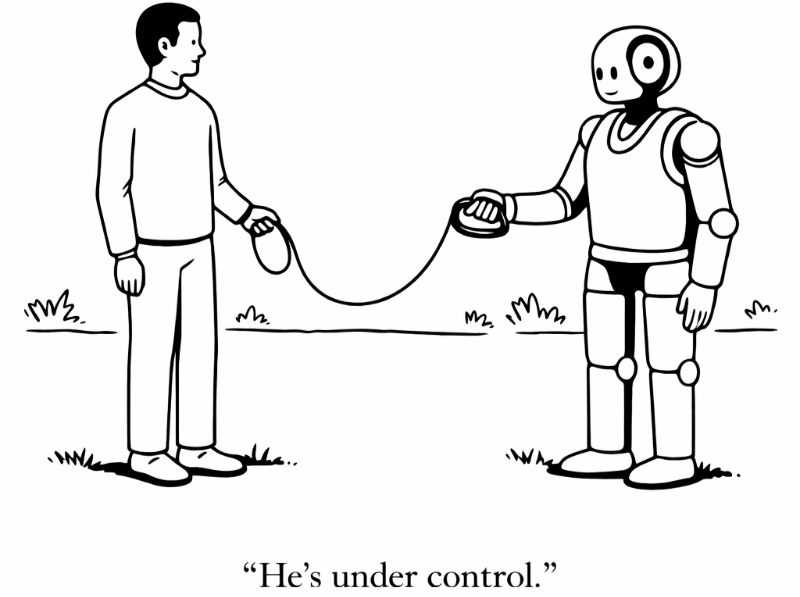

💡 Bottom Line

Security is becoming the control plane for the agentic era. As agents gain access to tools, data, and persistent workflows, the real risk isn’t what they say, it’s what they’re allowed to do. The platforms that win will be the ones that define, enforce, and audit those decisions.

⚙️ Try It Yourself

Pick one task you’d let an agent handle, like updating a ticket or sending an email. Now imagine it runs with full access across your systems.

If it makes one wrong decision, what actually happens?

That answer is your real architecture. Now scope the agent to only what the task needs and ask again; you’ll see the shift.
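One way to run the exercise on paper is to list what the agent has been granted, list what the task actually needs, and look at the difference. The sketch below does that with plain sets; the scope names are invented for illustration and do not correspond to any real product's permission model.

```python
def excess_permissions(granted: set[str], needed: set[str]) -> set[str]:
    """Return the scopes an agent holds but the task never requires."""
    return granted - needed


# Hypothetical scopes for a "full access" agent asked to update a ticket.
granted = {
    "tickets:read",
    "tickets:write",
    "email:send",
    "crm:delete",
    "billing:refund",
}
needed = {"tickets:read", "tickets:write"}

excess = excess_permissions(granted, needed)
print(sorted(excess))  # ['billing:refund', 'crm:delete', 'email:send']
```

Everything in the printed list is something a single wrong decision could reach even though the task never called for it.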

Agents execute. Permissions define the risk.